Recently I’ve become fascinated with a game called Werewolf, which is based on a similar social deduction game, “Mafia”. Players have limited information and have to talk amongst themselves to try to figure out who did what to whom, and who knew what when, before time runs out.

This is all an outgrowth of my decades-long obsession with speech-driven entertainment. Modern players are increasingly drawn to games that talk back. With the advent of real-time text-to-speech rendering and emotionally adaptive voices, a new class of voice-forward games can deliver immersion and intimacy unmatched by traditional click-through dialog trees. This framework delivers up-to-the-minute, world-aware, character-centric dialog, allowing each NPC to feel alive, distinct, and self-aware.

Social deduction games are interesting. A number of studies have been released that look at Werewolf and games like it as a measure of how effectively LLMs can keep track of imperfect information and reason out solutions amongst themselves. Games like Werewolf, Mafia, and Spyfall depend on the core tension between what players know and what they pretend they know. Modeling this requires support for misinformation, asymmetric perception, and role-aware deception. With that support, each character can operate within their own worldview – capable of lying convincingly or expressing genuine uncertainty – all while maintaining logical consistency with prior actions. The LLM players are not perfect, but the debate can take surprising turns on occasion.
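To make the "own worldview" idea a bit more concrete, here is a minimal sketch of how per-character knowledge might be tracked. Everything in it (the `Character` and `Claim` classes, the method names, the role strings) is made up for illustration and is not the actual engine: each character keeps a private belief map, a record of what they have said in public, and a check that stops them from contradicting their own earlier statements.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Claim:
    """A public statement: `speaker` asserts that `subject` holds `role`."""
    speaker: str
    subject: str
    role: str


@dataclass
class Character:
    # Hypothetical structure for illustration only.
    name: str
    true_role: str                                # private ground truth
    beliefs: dict = field(default_factory=dict)   # subject -> role this character believes
    said: list = field(default_factory=list)      # public claims made so far

    def observe(self, subject: str, role: str) -> None:
        """Record private information; other characters never see this."""
        self.beliefs[subject] = role

    def private_view(self, subject: str) -> Optional[str]:
        """What this character privately thinks `subject` is, or None if unsure."""
        if subject == self.name:
            return self.true_role
        return self.beliefs.get(subject)

    def claim(self, subject: str, role: str) -> Claim:
        """Make a public claim, refusing to contradict an earlier one."""
        for prior in self.said:
            if prior.subject == subject and prior.role != role:
                raise ValueError(
                    f"{self.name} already said {subject} is {prior.role}"
                )
        statement = Claim(self.name, subject, role)
        self.said.append(statement)
        return statement

    def conflicts_with_private_view(self, statement: Claim) -> bool:
        """True if the claim contradicts what this character privately believes.

        For the speaker this means the claim is a lie; for a listener it
        means they have a reason to doubt it. No private view means no conflict.
        """
        view = self.private_view(statement.subject)
        return view is not None and view != statement.role
```

A werewolf named Ada can then call `ada.claim("Ada", "villager")`; the claim conflicts with Ada's own private view (a lie), conflicts with the view of a seer who has observed her, and does not conflict for a villager who knows nothing, which is the "genuine uncertainty" case.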

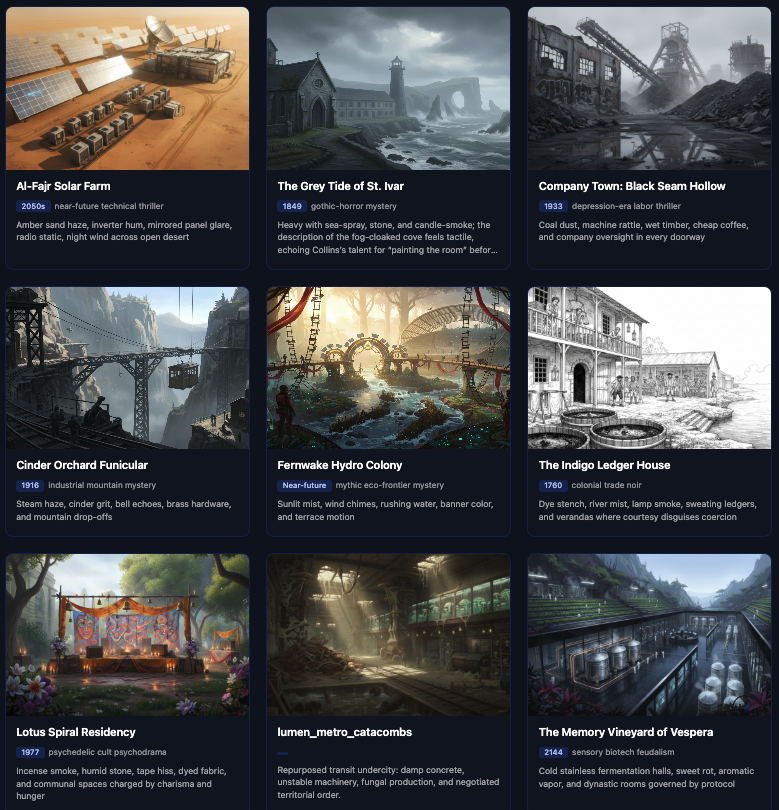

The beauty of the simple Werewolf mechanic is that you can map any number of settings and scenarios onto the framework. In fact, I’ve developed a sort of “Rashoon Engine” based on a production pipeline that proposes a setting and crafts a story that forms the spine of the experience. I’ve been playing around with the “once upon a time”, “until one day”, “and because of that”… story model, based in part on one of the Pixar story rubrics.
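As a rough illustration of that story model, a spine can be treated as a setting plus one piece of text per beat. This is only a sketch: the `StorySpine` class and its methods are hypothetical, and it covers just the three beats named above; any later beats in the rubric would be added to `SPINE_PROMPTS` in the same way.

```python
from dataclasses import dataclass, field

# Only the three beats named in the post; later beats are left out on purpose.
SPINE_PROMPTS = ("Once upon a time", "Until one day", "And because of that")


@dataclass
class StorySpine:
    # Hypothetical container for illustration, not the real pipeline.
    setting: str
    beats: dict = field(default_factory=dict)  # prompt -> text for that beat

    def missing(self) -> list:
        """Return the prompts that still have no text, in spine order."""
        return [p for p in SPINE_PROMPTS if not self.beats.get(p)]

    def render(self) -> str:
        """Join the beats into a short outline, one beat per line."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"spine is incomplete, missing: {gaps}")
        return "\n".join(f"{p} {self.beats[p]}" for p in SPINE_PROMPTS)
```

A generation step could fill `beats` one prompt at a time and use `missing()` to decide what to ask for next, with `render()` producing the outline that the rest of the pipeline builds on.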